What Improves Productivity: An Evidence Review

Executive Abstract

Despite abundant advice, productivity remains poorly understood and frequently misdiagnosed. This research-driven review synthesizes evidence from cognitive psychology, organizational science, economics, and field experiments to examine what actually improves productivity and why many popular interventions fail. The findings show that productivity is driven less by effort, motivation, or tools than by execution design under cognitive constraints. Interventions that reduce fragmentation, minimize context switching, align autonomy with structure, and improve work environments produce durable gains in output quality and sustainability. In contrast, multitasking, extended working hours, excessive tooling, and pressure-based incentives often increase visible activity while degrading judgment, coordination, and long-term performance. The analysis reframes productivity as a decision discipline rather than a self-help problem, emphasizing system design, cognitive load alignment, and execution quality over intensity. This article offers leaders, managers, professionals, and researchers an evidence-based lens to evaluate productivity decisions before they fail and to distinguish signal from noise.

1. Why Productivity Advice Is Mostly Noise

Productivity has become one of the most written-about—and least clearly understood—topics in modern work. Books, blogs, podcasts, and platforms promise faster output, sharper focus, and effortless efficiency. Yet despite this explosion of advice, reported burnout continues to rise, attention is increasingly fragmented, and many organizations struggle to translate effort into sustained performance gains.

Why Noise Beats Evidence

This disconnect is not accidental. Productivity advice spreads quickly because it is intuitive, immediate, and reassuring. It favors simple narratives—work harder, optimize your mornings, adopt the latest tool—over validated explanations of how work actually gets done. Content that feels actionable travels faster than content that is correct, especially when outcomes are hard to observe and slow to materialize.

The cost of this noise is not merely wasted time. Poor productivity decisions accumulate real consequences: chronic fatigue mistaken for commitment, activity mistaken for progress, and systems overloaded in the name of efficiency. At the organizational level, these errors lead to misallocated resources, distorted incentives, and execution failures that are often blamed on individuals rather than design.

This article takes a different approach. It starts from three reframes supported by decades of research:

- Productivity is not working harder.

- Productivity is not using more tools.

- Productivity is the quality of output produced per unit of constrained attention.

From this perspective, productivity becomes a decision problem, not a motivation problem. Improvements depend less on intensity and more on how attention, execution, and systems interact under real constraints.

Methodologically, this review synthesizes findings from peer-reviewed research across cognitive psychology, organizational science, economics, and field experiments. Rather than offering tactics or prescriptions, it examines what evidence consistently shows works, what fails despite popularity, and why well-intentioned interventions often backfire.

The boundary is deliberate. This is not self-help, performance coaching, or tool advocacy. It is decision intelligence—aimed at clarifying how productivity should be understood, evaluated, and designed when the stakes are real.

This analysis is intended for founders, operators, executives, investors, senior professionals, advisors, and thoughtful individual contributors who prioritize clarity over noise in consequential decisions.

2. What We Mean by “Productivity” (and Why Definitions Matter)

Most productivity discussions collapse under imprecision. Without clear definitions, evidence is misapplied, interventions are mismatched to context, and failures are misdiagnosed.

At a minimum, productivity operates at three distinct levels.

Individual productivity concerns how a person converts effort and attention into task execution.

Team productivity reflects coordination quality—how effectively work moves across roles and dependencies.

Organizational productivity emerges from systems: structure, incentives, decision rights, and information flow.

Findings at one level do not automatically transfer to another. Practices that improve individual focus may harm team coordination; measures that raise short-term output may undermine long-term organizational performance.

A second distinction is temporal. Short-term output captures immediate throughput—tasks completed, hours logged, responsiveness. Sustainable performance captures whether quality, learning, and health can be maintained over time. Much productivity advice optimizes for the former while quietly degrading the latter.

Finally, productivity can be measured or merely perceived. Activity, busyness, and responsiveness are highly visible and often mistaken for effectiveness. True productivity—error rates, rework, judgment quality, and resilience—tends to surface only with delay.

To anchor the analysis that follows, this article uses a simple but critical framing:

Input → Attention → Execution → Output

Inputs (time, tools, people) do not produce results directly. They act through attention, which constrains execution quality, which determines output. Productivity improvements must therefore measurably alter one or more of these links—by reallocating attention, reducing execution friction, or improving system design.

This framing matters because it explains why many popular productivity tactics feel effective while failing empirically. They increase visible input or effort without improving attention allocation or execution quality.
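The chain can be made concrete with a small arithmetic sketch. This is only an illustration of the framing, not a measurement model: the `WorkDay` fields, the multiplicative form, and every number below are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class WorkDay:
    """Hypothetical description of one working day (illustrative fields only)."""
    hours: float        # input: time made available
    focus_share: float  # attention: fraction of hours spent in focused work, 0..1
    rework_rate: float  # execution: fraction of focused work lost to errors and rework, 0..1


def effective_output(day: WorkDay) -> float:
    """Usable output in 'focused-hour equivalents' (an assumed, illustrative unit)."""
    focused_hours = day.hours * day.focus_share      # input acts only through attention
    return focused_hours * (1.0 - day.rework_rate)   # attention acts only through execution


baseline = WorkDay(hours=8, focus_share=0.5, rework_rate=0.2)
more_input = WorkDay(hours=10, focus_share=0.4, rework_rate=0.2)        # longer, more fragmented day
better_attention = WorkDay(hours=8, focus_share=0.65, rework_rate=0.2)  # same hours, less fragmentation

for name, day in [("baseline", baseline), ("more_input", more_input), ("better_attention", better_attention)]:
    print(f"{name}: {effective_output(day):.2f}")
```

Under these assumed numbers, the longer day yields the same usable output as the baseline because the extra hours are offset by lower focus, while the day with better attention allocation yields more output from the same hours.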

Clarifying definitions at the outset prevents misapplication of evidence later. It ensures that when productivity improves, we can say not just that it changed—but why.

3. What Actually Improves Productivity (Evidence Review)

The strongest productivity gains do not come from motivation, tools, or intensity—but from how work is structured, attention is protected, and responsibility is distributed.

This section synthesizes decades of peer-reviewed research from cognitive psychology, organizational science, and field experiments to identify interventions with consistent, evidence-backed effects on productivity—particularly in knowledge work.

Productivity Improves When Self-Management Reduces Fragmentation

One of the most consistent findings across productivity research is that how individuals manage their workday matters more than the physical environment or digital tooling.

Multiple studies show that structured self-management practices—planning, prioritization, and deliberate interruption control—produce measurable improvements in both output quantity and quality. In controlled interventions, training knowledge workers in task planning, time allocation, and interruption management reduced perceived fragmentation and improved self-rated performance months later, indicating effects that persist beyond short-term motivation boosts.

Notably, these effects are not marginal. Across professional settings, structured time-management frameworks such as prioritization matrices, time-blocking, and anti-fragmentation practices are associated with 20–25% improvements in project completion speed, without corresponding increases in working hours.

Decision implication:

Productivity gains emerge not from “working harder,” but from reducing the cognitive cost of deciding what to do next.

Reducing Context Switching Is a High-Leverage Intervention

Cognitive psychology offers one of the clearest signals in productivity research: context switching reliably degrades performance.

Decades of task-switching research demonstrate a persistent “switch cost”—people become slower and more error-prone immediately after changing tasks, even when they expect the switch and have time to prepare. This cost arises from interference between task sets and the mental effort required to reconfigure attention.

Field studies reinforce this finding. In real-world settings such as software development, frequent task switching correlates with higher stress, reduced focus, and lower perceived productivity. Interruptions lasting more than a few minutes significantly impair task resumption and increase the likelihood of abandoned work threads.

Task batching—the deliberate grouping of similar tasks—offers a partial countermeasure. Evidence from operations research, manufacturing, and computing consistently shows that batching similar work reduces setup and transition costs, improving throughput by 20–30% in some domains. When translated to knowledge work, batching cognitively similar tasks minimizes reconfiguration effort and preserves working memory continuity.

However, batching is not free. Excessively large batches or delayed switching can reduce responsiveness and introduce bottlenecks, mirroring latency tradeoffs observed in logistics and computing systems.
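The tradeoff between batching and responsiveness can be sketched with simple arithmetic. The model below is a deliberate simplification: it assumes one fixed switch cost per batch and identical task durations, and the function names and numbers are hypothetical rather than taken from any study.

```python
def total_time(n_tasks: int, batch_size: int, work_min: float, switch_min: float) -> float:
    """Minutes to finish n_tasks when one switch cost is paid per batch."""
    n_batches = -(-n_tasks // batch_size)  # ceiling division
    return n_tasks * work_min + n_batches * switch_min


def max_wait_for_other_work(batch_size: int, work_min: float, switch_min: float) -> float:
    """Longest wait for a different kind of request that arrives just as a batch starts."""
    return batch_size * work_min + switch_min


# Assumed example: 12 similar tasks, 10 minutes each, 5 minutes lost per switch.
for batch_size in (1, 4, 12):
    print(
        batch_size,
        total_time(12, batch_size, work_min=10, switch_min=5),
        max_wait_for_other_work(batch_size, work_min=10, switch_min=5),
    )
```

In this toy setup, moving from batches of 1 to batches of 4 cuts total time from 180 to 135 minutes, but the worst-case wait for unrelated work grows from 15 to 45 minutes. Batching all 12 tasks saves only 10 more minutes while pushing the worst-case wait to 125 minutes.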

Decision implication:

Minimize unnecessary context switching, but balance batching against responsiveness requirements. Productivity is constrained by attention recovery costs, not effort.

Autonomy Improves Productivity—Within Structure

Across experiments, field studies, and meta-analyses, job autonomy shows a generally positive relationship with productivity and performance, mediated by motivation, ownership, and engagement.

Experimental evidence demonstrates that even short-term autonomy priming can increase productivity by measurable margins, while sector-specific studies (construction, healthcare, telecommuting) consistently show autonomy predicting higher job performance and innovation outcomes.

However, the relationship is not linear. Firm-level studies indicate curvilinear effects: very high autonomy can increase turnover or coordination breakdowns if not paired with role clarity, trust, and support. Autonomy delivers the strongest productivity gains when employees possess sufficient skills, understand performance expectations, and operate within psychologically safe environments.

Decision implication:

Autonomy increases productivity when it reduces decision friction—not when it removes structure entirely.

Sustained Productivity Depends More on Environment Than Incentives

Short-term productivity spikes often come from incentives or pressure. Sustained productivity does not.

Across sectors, research consistently finds that supportive work environments, fair leadership, and psychological well-being are among the strongest predictors of durable productivity. Factors such as lighting, ergonomics, noise control, interpersonal trust, and freedom from workplace hostility show strong correlations with performance over time.

Leadership quality matters disproportionately. Management support, procedural justice, and trust-based supervision reduce production loss during change and increase engagement, which in turn predicts higher output and lower burnout.

Critically, employee health mediates many of these effects. Poor environments degrade health, which then depresses productivity—even when skills and incentives remain constant.

Decision implication:

Sustainable productivity is an environmental outcome. Tools and incentives cannot compensate for chronic stress, poor leadership, or unsafe climates.

The Pattern Across Evidence: Productivity Improves by Reducing Friction, Not Adding Pressure

When viewed collectively, the research reveals a consistent pattern:

Productivity improves when:

• Cognitive fragmentation is reduced

• Attention transitions are minimized

• Responsibility is aligned with capability

• Work environments support focus and psychological safety

It declines when:

• Workdays are over-fragmented

• Multitasking is normalized

• Autonomy is granted without clarity

• Pressure substitutes for system design

This explains why many popular productivity interventions fail despite good intentions—they add effort without removing friction.

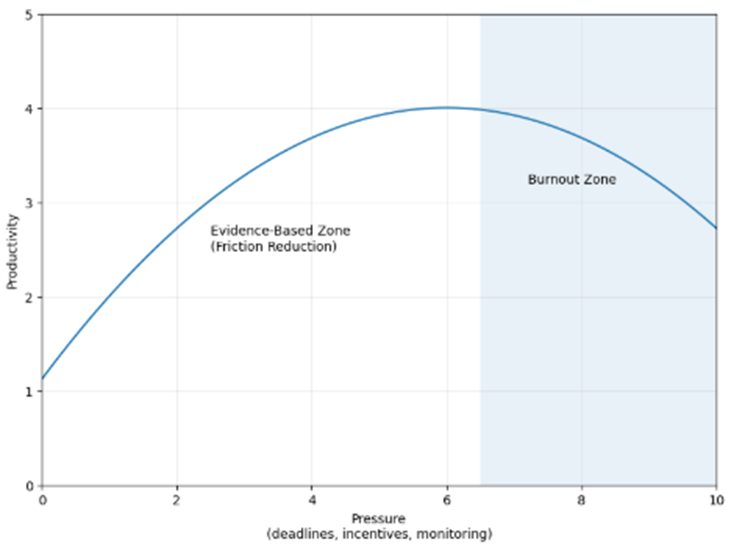

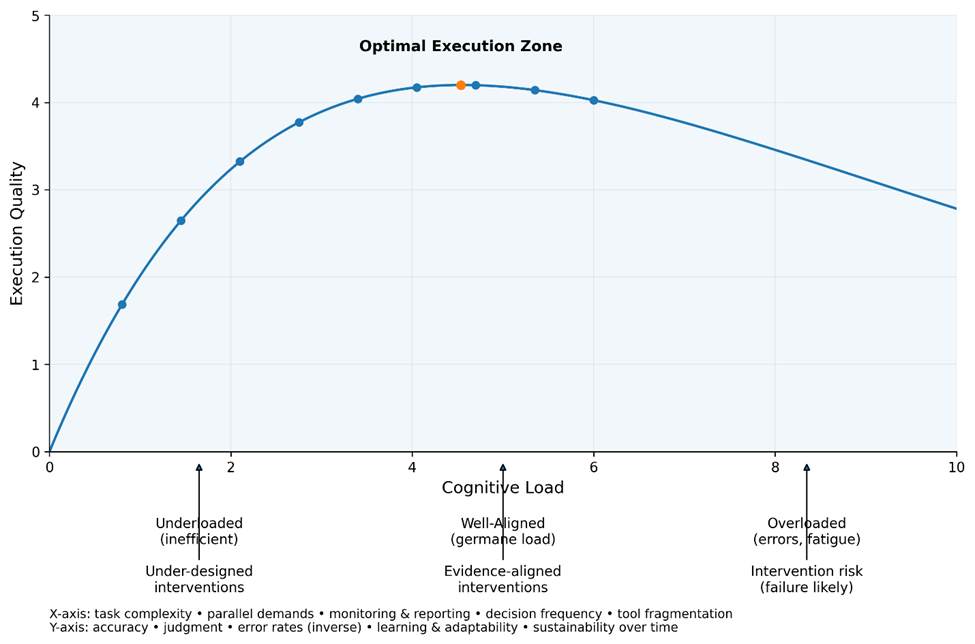

The Friction vs. Pressure Curve

The chart below shows why most productivity advice fails and where evidence-based gains actually come from.

Figure 1. The Friction vs. Pressure Curve

Illustrates why productivity gains plateau and reverse when pressure increases without reducing underlying friction.

The Friction vs. Pressure

Most productivity interventions focus on increasing pressure—through tighter deadlines, incentives, tools, or monitoring. The evidence reviewed in this section suggests that this approach delivers diminishing returns.

At low levels of pressure, modest increases can raise output by clarifying priorities and reducing procrastination. However, when friction remains high (through task fragmentation, frequent context switching, unclear roles, or poor environments), additional pressure produces little sustained productivity gain.

Beyond a threshold, productivity declines as cognitive load, stress, and error rates increase. This is the burnout zone: output may appear high temporarily, but quality, retention, and long-term performance deteriorate.

In contrast, the evidence-supported interventions identified in this review operate primarily by reducing friction rather than increasing pressure. Improvements in self-management, task batching, autonomy with structure, and supportive environments shift the curve upward—allowing higher productivity at lower levels of pressure.

The central implication is that productivity is not maximized by pushing harder, but by designing work so less effort is wasted overcoming avoidable friction.
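The shape of this argument can be illustrated with a toy curve. The functional form below (a quadratic inverted U scaled down by friction) is an assumption made purely for illustration; the review does not specify an equation, and the numbers carry no empirical weight.

```python
def sustained_output(pressure: float, friction: float) -> float:
    """Toy inverted-U: output rises with pressure, peaks at 0.5, then falls.

    Both arguments are on an assumed 0..1 scale. Friction scales the whole curve down.
    """
    if not (0.0 <= pressure <= 1.0 and 0.0 <= friction <= 1.0):
        raise ValueError("pressure and friction must be between 0 and 1")
    return (1.0 - friction) * 4.0 * pressure * (1.0 - pressure)


high_friction_peak = sustained_output(pressure=0.5, friction=0.6)      # best case under high friction
high_friction_overload = sustained_output(pressure=0.9, friction=0.6)  # pushing harder past the peak
low_friction_mild = sustained_output(pressure=0.2, friction=0.2)       # less friction, mild pressure

print(high_friction_peak, high_friction_overload, low_friction_mild)
```

With these assumed values, the low-friction system under mild pressure outperforms the high-friction system even at its best pressure level, and adding pressure past the peak lowers output further. That is the qualitative pattern the curve describes.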

Evidence Synthesis: What the Research Consistently Shows

Across cognitive psychology, organizational science, economics, and field experiments, the evidence reviewed in this article converges on a small number of consistent findings:

- Productivity improves most reliably when cognitive fragmentation is reduced. Interventions that simplify task sequencing, clarify priorities, and reduce unnecessary decision-making consistently outperform those that increase effort or urgency.

- Frequent context switching imposes persistent performance costs that motivation and skill do not eliminate. Task switching degrades speed, accuracy, and judgment, even among experienced professionals and even when switching is intentional.

- Structured autonomy outperforms both micromanagement and unbounded freedom. Autonomy improves productivity when paired with role clarity, decision rights, and execution structure; without these, coordination and performance deteriorate.

- Sustained productivity is more strongly associated with work environment and leadership quality than with incentives or tools. Psychological safety, fairness, and supportive conditions explain more long-term performance variance than short-term pressure or rewards.

- Common productivity tactics fail when they increase pressure without removing friction. Multitasking, extended working hours, and excessive tooling often raise visible activity while degrading output quality and sustainability.

Taken together, the evidence suggests that productivity is predominantly an execution and system design outcome. Once baseline capability is present, differences in productivity are explained more by how work is structured, coordinated, and evaluated than by individual effort, motivation, or tool adoption.

This synthesis does not imply that effort, skill, or tools are irrelevant. Rather, it shows that their impact is contingent: they amplify or constrain performance depending on the execution system in which they operate.

4. What Doesn’t Work (Despite Popularity)

Many widely promoted productivity tactics persist not because they work, but because they feel intuitively right under pressure.

The research reviewed in this section converges on a consistent pattern: several of the most common productivity strategies either fail to improve real performance or actively degrade output quality over time. These approaches often survive because they increase visible effort, urgency, or activity—while masking deeper execution costs.

Multitasking Reduces True Productivity, Even When It Feels Efficient

Across cognitive psychology, workplace studies, and experimental economics, the evidence is clear: multitasking reliably lowers performance on complex, cognitively demanding work.

Laboratory and field studies show that performing multiple demanding tasks concurrently slows completion time and increases error rates compared to sequential task execution. Meta-analytic evidence on media multitasking finds a medium-to-large negative effect on comprehension, memory, and problem-solving—core components of knowledge work performance.

The underlying mechanism is well established. Human cognitive capacity is limited, and frequent task switching imposes “switch costs”: time delays, increased mistakes, and higher cognitive load. These costs persist even when individuals believe they are good multitaskers or choose their own task order.

There are narrow exceptions. In settings involving many small, simple tasks, limited multitasking can increase total throughput up to a point. Some studies also find that multitasking may increase subsequent creativity, likely through heightened cognitive activation rather than improved execution of the multitasked work itself. However, these effects are modest and do not generalize to deep, complex work.

Why the myth persists:

Multitasking increases the feeling of busyness and progress, even as objective performance and focus decline.

Longer Working Hours Do Not Improve Output Quality Beyond a Threshold

Another persistent belief is that working longer hours increases productivity or at least preserves quality. The evidence does not support this.

Across sectors, studies consistently show diminishing—and eventually negative—returns to extended working hours. Performance follows an inverted U-shaped relationship: productivity and quality rise with workload up to a moderate point, then flatten or decline as fatigue accumulates.

Field data demonstrate that longer daily hours often reduce hourly productivity, increasing handling time per task and lowering efficiency. In healthcare and other high-stakes environments, extended hours are associated with higher error rates, communication failures, and adverse outcomes—alongside burnout and health deterioration.

Health effects matter because they directly mediate performance. Long working hours degrade sleep quality, vitality, and self-rated health, all of which are strongly linked to poorer job performance and higher error risk. Even personality traits associated with high effort, such as perfectionism, show only marginal performance gains from longer hours.

There is limited evidence that increasing hours from very low baselines can improve output by reducing underutilization or supporting learning. However, once moderate workloads are exceeded, additional hours tend to erode quality rather than enhance it.

Why the myth persists:

Long hours signal commitment and effort, even when they reduce effectiveness.

Productivity Apps Deliver Modest Gains—And Often Plateau or Backfire

Digital productivity tools—task managers, communication platforms, AI assistants—are frequently marketed as productivity multipliers. The evidence suggests a more constrained reality.

Across studies, productivity apps are associated with small-to-moderate improvements in self-reported productivity and task efficiency, particularly when tools are well-designed, integrated into workflows, and matched to task requirements.

However, benefits are not automatic. Overuse of communication and collaboration tools often increases information overload, stress, and distraction, offsetting gains. In remote and hybrid settings, productivity outcomes vary widely: optional or well-supported remote work can improve performance, while mandatory, high-intensity digital work—especially with excessive meetings—often shows neutral or negative effects.

Crucially, many interventions improve perceived productivity more than objective performance. Systematic reviews find that while tools may improve organization or health-related outcomes, they frequently fail to produce measurable productivity gains due to poor implementation, short evaluation windows, or crude metrics.

Why the myth persists:

Apps increase visibility and activity, creating an illusion of control—even when cognitive load rises.

The Common Failure Pattern

Across multitasking, long hours, and productivity tools, a common pattern emerges:

These approaches attempt to increase productivity by adding pressure, activity, or intensity without removing underlying friction—such as cognitive overload, poor task design, unclear priorities, or unhealthy environments.

As a result, they often raise effort while suppressing sustainable output quality.

This explains why many individuals and organizations feel perpetually busy yet struggle to produce high-quality work consistently.

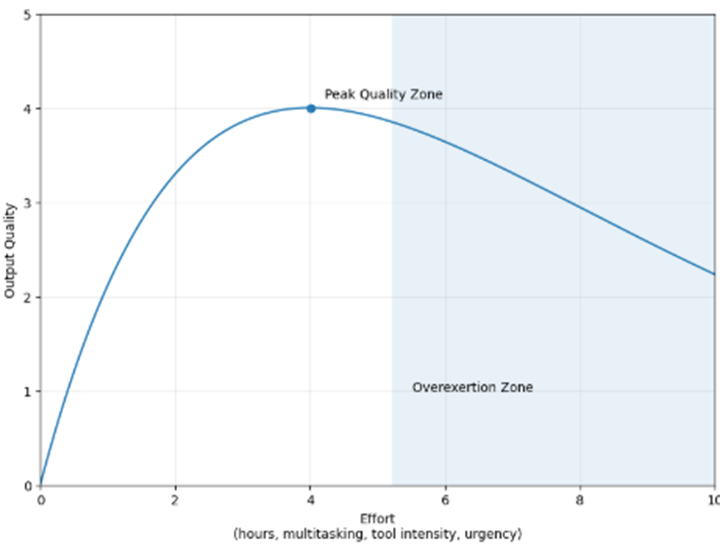

Effort vs. Output Quality Curve

The chart below explains why multitasking, long hours, and excessive tooling feel productive yet often degrade real output quality.

Figure 2. Effort vs. Output Quality

Shows the inverted-U relationship between effort and output quality, with performance peaking at moderate effort and declining as overload and fatigue increase.

Effort vs. Output Quality

Research across cognitive psychology and organizational studies shows that output quality follows an inverted-U relationship with effort. As effort increases from low levels (through moderate workload, focus, and engagement), output quality improves. However, beyond a threshold, additional effort reduces quality as fatigue, cognitive overload, and coordination costs accumulate.

Common productivity tactics such as multitasking, extended working hours, and intensive tool use push work into the overexertion zone. In this region, visible activity increases, but accuracy, learning, and sustainable performance decline.

The implication is not that effort is unimportant, but that effort beyond structural limits degrades judgment and execution quality. Productivity interventions that ignore this relationship often trade short-term activity for long-term performance loss.

5. Why Productivity Interventions Fail

Most productivity initiatives fail not because the ideas are wrong, but because the system they are inserted into is misaligned.

Despite decades of experimentation, organizations repeatedly deploy productivity interventions—new tools, metrics, incentives, or policies—with disappointing results. The research reviewed in this section shows that failure is rarely random. Instead, it follows a small number of predictable structural patterns that undermine execution.

Interventions Fail When They Add Activity Without Removing Friction

A consistent finding across organizational studies is that productivity interventions often increase visible activity while leaving underlying execution barriers intact.

Many initiatives introduce new processes, reporting requirements, or tools intended to improve efficiency. In practice, these additions frequently increase coordination costs, cognitive load, and administrative overhead. Employees spend more time managing the intervention itself—updating systems, complying with metrics, attending meetings—while the original sources of inefficiency remain unresolved.

When interventions fail to address core constraints—such as unclear priorities, task fragmentation, or overloaded decision pathways—they tend to shift work rather than improve it. Output may appear higher in the short term, but execution quality and sustainability deteriorate.

Failure pattern:

Interventions target symptoms (speed, volume, visibility) instead of structural causes (clarity, sequencing, capacity).

Incentives and Metrics Distort Behavior When They Replace Judgment

Research on incentives and performance measurement shows that what gets measured and rewarded strongly shapes behavior, often in unintended ways.

When productivity is proxied through narrow metrics—hours logged, tasks completed, responsiveness, utilization—employees rationally optimize for the metric rather than for true value creation. This leads to gaming, short-termism, and risk avoidance, even when overall performance indicators appear to improve.

Incentives can also crowd out intrinsic motivation. Studies consistently find that excessive performance pressure or poorly designed rewards reduce learning, experimentation, and discretionary effort—particularly in knowledge-intensive roles where output quality matters more than throughput.

The result is a paradox: organizations invest heavily in measurement systems to improve productivity, yet those same systems can erode the very judgment and ownership required for effective execution.

Failure pattern:

Metrics become targets; targets replace thinking.

Organizational Context Determines Whether Interventions Translate Into Execution

Even evidence-backed interventions fail when organizational conditions are hostile to execution.

Across sectors, research identifies several contextual factors that reliably reduce execution effectiveness:

• Role ambiguity and conflicting priorities

• Low trust between employees and leadership

• Weak feedback loops and delayed learning

• Psychological insecurity and fear of error

• Fragmented decision authority

In such environments, employees may understand what to do but lack the clarity, autonomy, or safety required to execute consistently. Productivity interventions introduced into these contexts often amplify stress and disengagement rather than improving outcomes.

Importantly, many organizations misattribute these failures to resistance or skill gaps, when the evidence points to system-level misalignment between expectations, authority, and support.

Failure pattern:

Execution breaks down when responsibility exceeds control.

The Common Thread: Productivity Is Treated as a Tool Problem, Not a System Property

Taken together, the research shows that productivity interventions fail when organizations:

• Isolate tactics from context

• Substitute measurement for judgment

• Increase pressure without redesigning work

• Ignore execution capacity and learning dynamics

Productivity is not a feature that can be installed. It is an emergent property of how decisions, incentives, and environments interact over time.

This explains why many organizations cycle through successive waves of productivity programs with diminishing returns: each new intervention adds complexity to a system already operating near its cognitive and coordination limits.

Implication for Decision-Makers

The central implication is not that productivity interventions are futile, but that their success depends less on the intervention itself and more on the system into which it is introduced.

Interventions that align incentives with judgment, reduce friction rather than add activity, and respect execution constraints are far more likely to improve real performance. Those that do not tend to produce motion without progress.

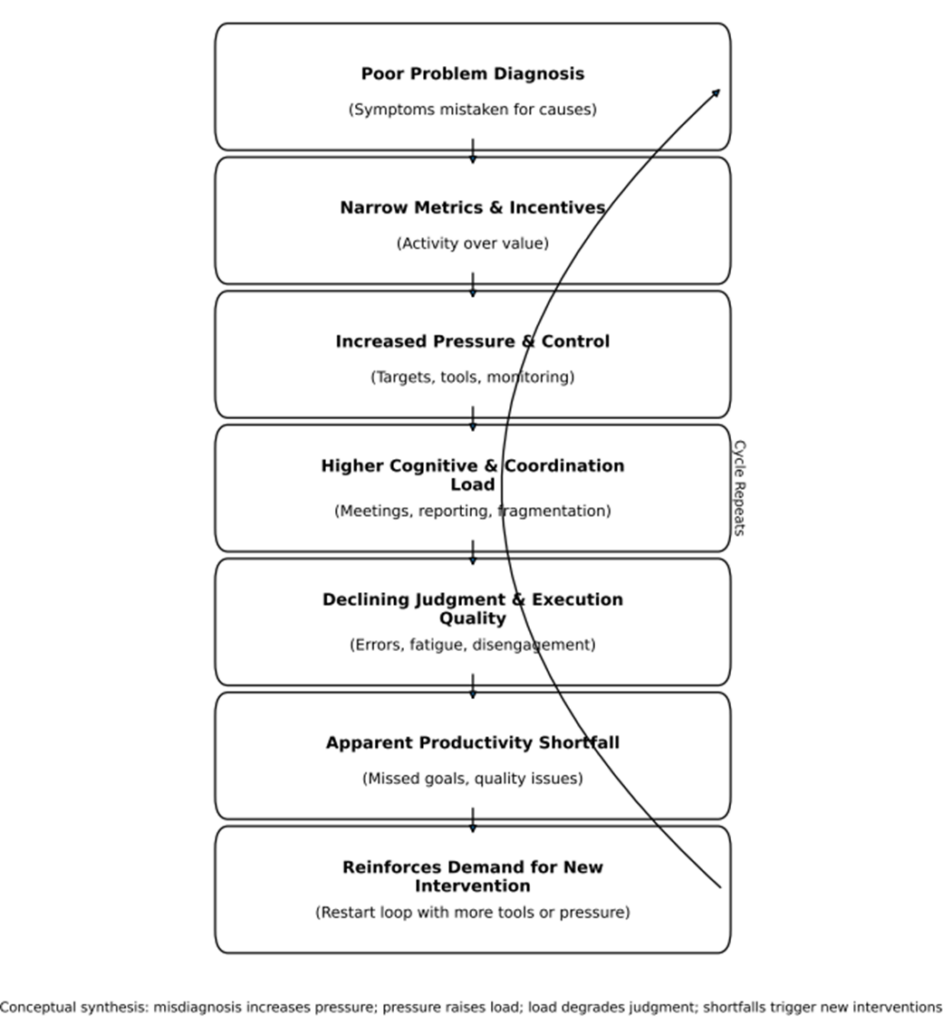

Figure 3. The Productivity Intervention Failure Loop

Shows a recurring cycle in which misdiagnosis and pressure-based interventions increase cognitive load, degrade execution quality, and trigger further ineffective interventions.

Why Productivity Interventions Fail

Figure 3 illustrates a recurring failure loop observed across organizational productivity research. Interventions often begin with misdiagnosis: visible output shortfalls are treated as effort or discipline problems rather than execution or system constraints. Narrow metrics and incentives then amplify pressure without reducing friction, increasing cognitive and coordination load. As judgment and execution quality decline, performance appears to worsen, reinforcing the belief that another intervention is required. The cycle repeats, accumulating complexity while eroding effectiveness.

6. Decision Frameworks: How to Evaluate Productivity Interventions Before They Fail

The central mistake in productivity decisions is not choosing the wrong intervention—it is choosing without a framework that respects execution limits.

Most productivity initiatives are evaluated after deployment, using lagging indicators and narrow metrics. The research suggests a different approach: productivity interventions should be evaluated ex ante, using decision frameworks that clarify where an intervention acts, what it loads cognitively, and how execution quality is likely to change.

What Existing Evaluation Models Get Right—and Where They Fall Short

Across fields, three families of models are commonly used to evaluate productivity interventions.

Logic and multilevel impact models trace causal chains from inputs and activities to outputs, outcomes, and broader impacts. These models help decision-makers identify whether an intervention targets processes, behaviors, or structural conditions—and at what organizational level it operates.

Productivity measurement models expand beyond simple output counts, incorporating absenteeism, presenteeism, engagement, and task-level performance. In knowledge work, multi-dimensional benchmarking tools are particularly useful for detecting whether apparent productivity gains reflect real execution improvements or merely shifts in effort and reporting.

Economic evaluation frameworks translate productivity changes into monetary terms using human-capital, friction-cost, or output-based methods. These models are valuable when decisions require financial justification, but they often rely on coarse proxies that obscure execution quality and learning effects.

Taken together, these models improve rigor. However, they share a common limitation: they largely treat productivity as an outcome variable, not as a constrained execution process.

Why Cognitive Load Must Be Central to Any Evaluation Framework

Research on cognitive load provides a missing link between intervention design and execution outcomes.

Across domains—manufacturing, healthcare, finance, interviewing, and complex decision-making—higher cognitive load reliably degrades execution quality. Increased load slows performance, increases error rates, and pushes behavior toward faster but less controlled responses, particularly under dual-task or time-pressured conditions.

Importantly, the relationship is non-linear. Moderate, task-relevant (“germane”) load can sharpen attention, support learning, or improve precision in skilled performers. Excessive or misaligned load, however, overwhelms working memory and executive control, degrading judgment and coordination.

Expertise moderates these effects. Skilled individuals tolerate higher load with smaller performance penalties, while novices experience sharp declines. This explains why identical productivity interventions succeed in some teams and fail in others—capacity, not effort, is the binding constraint.

Key implication:

Any evaluation framework that ignores cognitive load risks approving interventions that look efficient on paper but degrade execution in practice.

A Decision-First Way to Evaluate Productivity Interventions

Synthesizing these findings suggests a shift from tool-centric evaluation to decision-centric evaluation.

Before adopting a productivity intervention, decision-makers should be able to answer five questions:

- At what level does this intervention act? (Individual task execution, team coordination, organizational structure)

- What friction does it remove, or what load does it add? (Cognitive, coordination, informational, emotional)

- How does it change cognitive load distribution? (Does it simplify decisions, or introduce parallel demands?)

- Who has the expertise to absorb the load? (And who does not?)

- Which metrics will reflect execution quality, not just activity?

These questions are not substitutes for formal models; they are filters that determine whether formal evaluation results are meaningful.
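One way to apply the five questions as a filter is to record an answer to each before any formal evaluation begins. The sketch below is illustrative only: the class `InterventionScreen`, its field names, and the example intervention are hypothetical, not part of any established framework.

```python
from dataclasses import dataclass, fields


@dataclass
class InterventionScreen:
    """One free-text answer per screening question; empty means unanswered."""
    level_of_action: str = ""
    friction_removed_or_load_added: str = ""
    load_distribution_change: str = ""
    who_can_absorb_load: str = ""
    execution_quality_metrics: str = ""


def unanswered(screen: InterventionScreen) -> list[str]:
    """Return the names of the questions that still have no answer."""
    return [f.name for f in fields(screen)
            if not getattr(screen, f.name).strip()]


def ready_for_formal_evaluation(screen: InterventionScreen) -> bool:
    """The filter passes only when all five questions have an answer."""
    return not unanswered(screen)


# Hypothetical intervention: a new status dashboard for a project team.
screen = InterventionScreen(
    level_of_action="team coordination",
    friction_removed_or_load_added=(
        "removes weekly status meeting; adds a dashboard to check"
    ),
)
print(unanswered(screen))
print(ready_for_formal_evaluation(screen))
```

The check is deliberately simple. It does not judge the quality of the answers, only whether the decision-maker has confronted each question before relying on formal results.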

Why Many “Validated” Interventions Still Disappoint

The research reviewed earlier explains a persistent paradox: interventions can score well under standard evaluation models yet still fail in execution.

Logic models may confirm causal alignment. Measurement frameworks may show increased activity. Economic models may estimate positive returns. Yet if the intervention increases cognitive load beyond execution capacity—through multitasking, excessive monitoring, or poorly integrated tools—performance degrades despite favorable indicators.

This is not a failure of evidence. It is a failure of decision framing.

Implication for Productivity Decisions

The most reliable productivity gains come not from selecting the “best” intervention, but from matching interventions to execution capacity.

Decision frameworks that integrate:

• multilevel causal thinking,

• multidimensional measurement,

• and explicit cognitive load assessment

are far more likely to produce sustained improvements in output quality and performance.

Productivity, in practice, is less about doing more—and more about designing work so fewer decisions compete for limited cognitive resources.

Cognitive Load and Execution Quality Curve

As illustrated in Figure 4, productivity interventions should be evaluated by how they shift cognitive load relative to execution capacity—not merely by their intended efficiency gains.

Figure 4. The Cognitive Load and Execution Quality Curve. Execution quality peaks when cognitive load is well aligned with task demands and expertise, and declines when load is too low or too high.

In the Cognitive Load and Execution Quality curve, execution quality follows a non-linear relationship with cognitive load. When load is too low, capacity is underutilized and execution is inefficient. When load is well-aligned with task demands and expertise, execution quality peaks. Beyond this optimal zone, excessive or misaligned load degrades judgment, increases errors, and reduces sustainability.

Productivity interventions should be evaluated based on where they push work along this curve. Interventions that add cognitive load without removing friction risk moving execution into the overload zone—even if they perform well under traditional productivity metrics.
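The shape of the curve can be sketched numerically. The functional form below (a bell-shaped curve in the ratio of load to capacity) and every number in it are assumptions made for illustration. The research summarized here describes an inverted-U relationship but does not specify this equation.

```python
import math


def execution_quality(load: float, capacity: float, width: float = 0.5) -> float:
    """Illustrative inverted-U: quality is 1.0 when load equals capacity and
    falls off as load moves below or above it. `width` (an assumed parameter)
    controls how quickly quality declines away from the peak."""
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    ratio = load / capacity
    return math.exp(-((ratio - 1.0) ** 2) / (2 * width ** 2))


# Hypothetical scenario: the same intervention adds 0.5 units of load
# to a novice (capacity 1.0) and to an expert (capacity 2.0).
baseline_load, added_load = 1.0, 0.5

novice_before = execution_quality(baseline_load, capacity=1.0)
novice_after = execution_quality(baseline_load + added_load, capacity=1.0)
expert_before = execution_quality(baseline_load, capacity=2.0)
expert_after = execution_quality(baseline_load + added_load, capacity=2.0)

print(f"novice: {novice_before:.2f} -> {novice_after:.2f}")
print(f"expert: {expert_before:.2f} -> {expert_after:.2f}")
```

Under these assumptions, the added load pushes the novice past the peak while moving the expert toward it, which mirrors the observation that identical interventions can succeed in one team and fail in another.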

7. Implications for Leaders, Professionals, and Organizations

The evidence reviewed throughout this article points to a consistent conclusion: productivity improves not through intensity or novelty, but through disciplined judgment about how work is designed, evaluated, and sustained. The implications differ slightly by role.

For Knowledge Workers

Reconsider:

Equating busyness with effectiveness. Multitasking, constant responsiveness, and extended hours create the appearance of productivity while quietly degrading execution quality.

Test carefully:

Work structures that reduce fragmentation—fewer concurrent tasks, clearer sequencing, and bounded attention demands. The evidence suggests that modest changes in how work is organized can produce outsized gains relative to additional effort.

Measure differently:

Focus less on volume and responsiveness, and more on error rates, rework, and recovery. Sustained output quality is a more reliable indicator of real productivity than activity counts.

For Managers

Reconsider:

Using pressure, monitoring, or narrow metrics as substitutes for clarity. When measurement replaces judgment, teams optimize for signals rather than outcomes.

Test carefully:

Interventions that remove friction rather than add process—especially those that improve role clarity, coordination, and cognitive load alignment. The same intervention can succeed or fail depending on execution capacity.

Measure differently:

Track execution quality over time, not just short-term throughput. Indicators such as handoff failures, escalation frequency, and learning cycles often reveal more than traditional productivity dashboards.
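As a rough sketch of how such indicators might be computed, the example below derives three rates from a list of completed work items. The `WorkItem` record, its fields, and the sample data are hypothetical. Real tracking systems will define rework, handoff failure, and escalation in their own ways.

```python
from dataclasses import dataclass


@dataclass
class WorkItem:
    """Hypothetical record of one completed piece of work."""
    reworked: bool        # had to be redone after delivery
    handoff_failed: bool  # was dropped or misrouted between people or teams
    escalated: bool       # needed intervention from a higher level


def quality_rates(items: list[WorkItem]) -> dict[str, float]:
    """Share of items affected by each execution-quality problem."""
    n = len(items)
    if n == 0:
        return {"rework_rate": 0.0, "handoff_failure_rate": 0.0,
                "escalation_rate": 0.0}
    return {
        "rework_rate": sum(i.reworked for i in items) / n,
        "handoff_failure_rate": sum(i.handoff_failed for i in items) / n,
        "escalation_rate": sum(i.escalated for i in items) / n,
    }


# Hypothetical sample of four work items.
items = [
    WorkItem(reworked=True, handoff_failed=True, escalated=False),
    WorkItem(reworked=False, handoff_failed=True, escalated=False),
    WorkItem(reworked=False, handoff_failed=False, escalated=False),
    WorkItem(reworked=False, handoff_failed=False, escalated=False),
]
rates = quality_rates(items)
print(rates)
```

Computed per period and compared over time, rates like these show whether execution quality is holding up even when throughput looks stable.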

For Founders and Executives

Reconsider:

Treating productivity as a cultural trait or motivational problem. Evidence consistently shows that effort and intensity cannot compensate for misaligned systems.

Test carefully:

Changes to structure, incentives, and decision rights before introducing new tools or targets. Productivity is highly sensitive to how authority, accountability, and information flow interact.

Measure differently:

Look beyond aggregate output to system health: decision latency, coordination costs, and error propagation. These factors often determine whether growth amplifies performance or fragility.

8. Productivity as a Judgment Discipline

The central lesson from decades of research is deceptively simple: productivity is not about doing more—it is about deciding better.

Productivity gains come from fewer, higher-quality decisions about how work is structured, how attention is allocated, and how execution is evaluated. Evidence consistently outperforms intensity. Pressure without redesign raises activity but erodes judgment. Tools without alignment add load but not capacity. Metrics without context distort behavior rather than improving outcomes.

Most importantly, sustainable productivity is not a personality trait. It is a systems outcome—emerging from the interaction of cognitive limits, organizational design, incentives, and environment. When those elements align, productivity rises with less effort. When they do not, no amount of motivation can compensate.

Signal Journal exists to make these distinctions clear. Its commitment is to slow, accountable thinking: synthesizing evidence, challenging myths, and clarifying how decisions with real consequences should be made.

Future research in the Journal will extend this work—examining productivity in financial decision-making, execution under uncertainty, and how measurement systems shape long-term outcomes. The goal remains constant: to separate signal from noise where the stakes are real.

Tracking sales per employee monthly (per our definition article) and applying these interventions can yield 15-25% margin expansion.

Which signal will you audit first?

Research Foundation

This article synthesizes findings from peer-reviewed research across productivity science, organizational behavior, management studies, cognitive psychology, and strategy execution research. The evidence base integrates studies on knowledge-worker productivity practices, task switching and multitasking effects, job autonomy and motivation, workplace environment and leadership quality, workload and working-hour dynamics, incentive systems, and the role of digital tools in modern work.

A large body of research shows that execution quality is strongly shaped by cognitive load. Excessive task switching, fragmented workflows, and competing demands increase cognitive load and are consistently associated with slower performance, higher error rates, and degraded decision quality, while well-aligned and moderate load can support focused execution and learning.

Research on productivity interventions further indicates that many initiatives fail not because the tools are ineffective but because of misdiagnosis, narrow metrics, weak leadership alignment, and poorly designed organizational systems. These dynamics often create reinforcing failure cycles in which increased pressure and monitoring raise cognitive and coordination load, ultimately reducing execution quality and triggering further interventions.

The conceptual figures presented in this article—the Optimal Execution Zone and the Productivity Intervention Failure Loop—represent a synthesis of these literatures, illustrating how cognitive load, measurement systems, organizational design, and leadership practices interact to influence sustained productivity outcomes.

The article therefore represents a cross-disciplinary research synthesis, translating empirical findings from economics, psychology, and management research into practical insights for leaders seeking sustainable productivity improvement and stronger organizational performance.

Selected References

Antony, J., & Gupta, S. (2019). Top ten reasons for process improvement project failures. International Journal of Lean Six Sigma.

Aral, S., Brynjolfsson, E., & Van Alstyne, M. (2011). Information technology and information worker productivity. Information Systems Research.

Biondi, F., Cacanindin, A., Douglas, C., & Cort, J. (2020). Investigating the effect of cognitive workload on task performance. Human Factors.

Diamantidis, A., & Chatzoglou, P. (2019). Factors affecting employee performance: An empirical approach. International Journal of Productivity and Performance Management.

Fernandes, R., Carey, D., Bortoluzzi, B., & McArthur, J. (2019). Benchmarking knowledge-worker productivity: A multidimensional measurement tool. Intelligent Buildings International.

Jeong, S., & Hwang, Y. (2016). Media multitasking effects on cognitive outcomes: A meta-analysis. Human Communication Research.

Kianto, A., Shujahat, M., Hussain, S., Nawaz, F., & Ali, M. (2018). The impact of knowledge management on knowledge worker productivity. Baltic Journal of Management.

Monsell, S. (2003). Task switching. Trends in Cognitive Sciences.

Palvalin, M., Voordt, T., & Jylhä, T. (2017). Self-management practices and knowledge-worker productivity. Journal of Facilities Management.

Sauermann, J. (2023). Performance measures and worker productivity. IZA World of Labor.

Steel, J., Godderis, L., & Luyten, J. (2018). Productivity estimation in economic evaluation of workplace interventions. Scandinavian Journal of Work, Environment & Health.